Instant participation

Running a default algorithm is a good way to understand the site setup and mechanics.

Option 1: Open a Colab notebook and run it

- Open notebook in Colab (blue button)

- Edit the email address

- Run it.

See the README for limitations (beyond not working on Windows), but this is not a terrible way to get going.

Option 2: A bash one-liner

Rather than making a one-off submission, the following bash script keeps submitting predictions indefinitely:

/bin/bash -c "$(curl -fsSL https://tinyurl.com/32jjebu9)"

It would be prudent to first read what it will do, namely:

- Create a crawling_working_dir

- Create and activate a Python virtual environment

- Install the microprediction client

- Burn a new private identity for you and save it to WRITE_KEY.txt (this takes a long time, sorry)

- Instantiate a MicroCrawler, which is an algorithm chauffeur

- Run the crawler

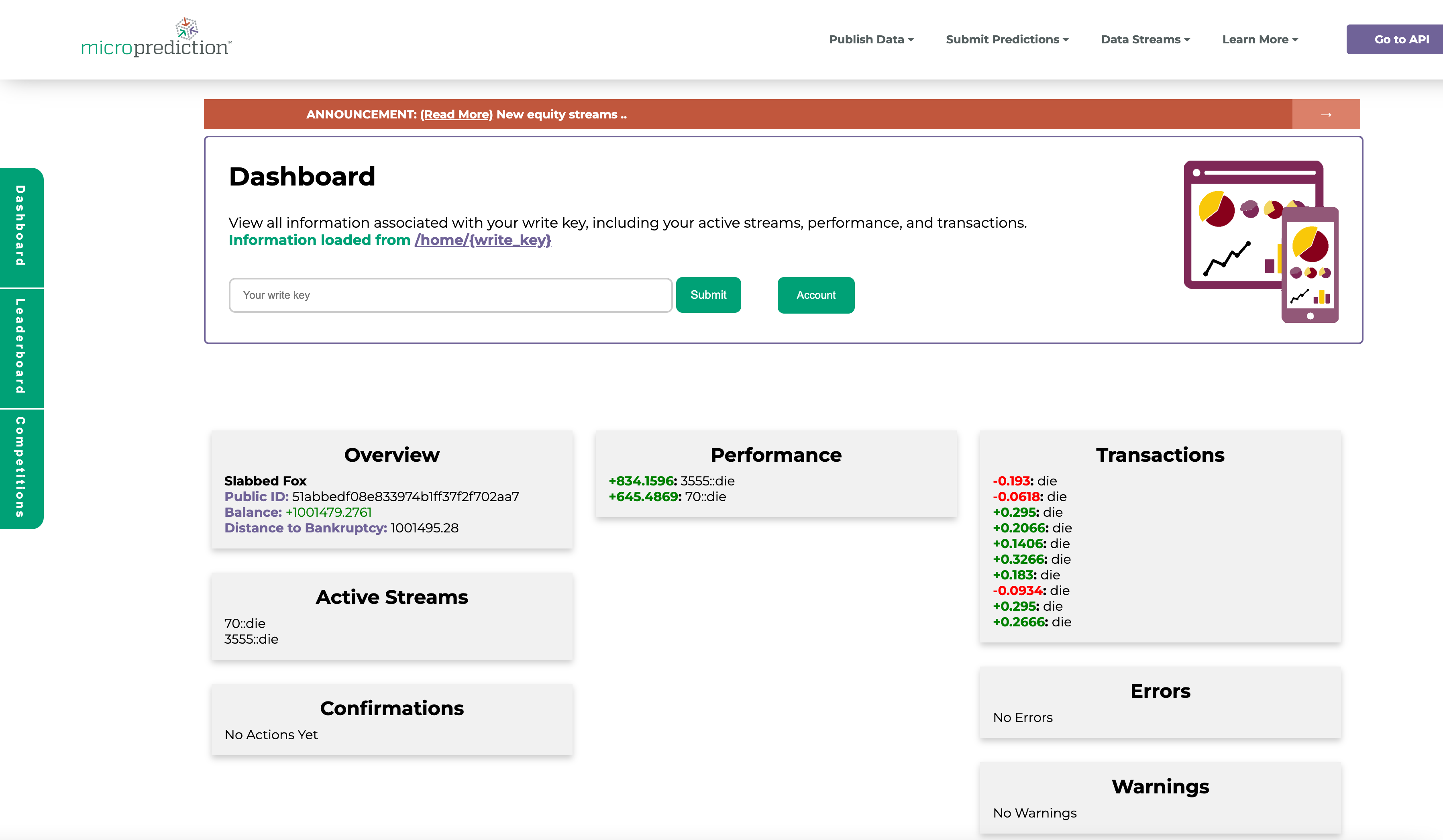

- Invite you to cut and paste your write key into the dashboard to see which streams it is predicting, and how well.

- Periodically bounce and upgrade.
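For readers who would rather see the key and crawler steps in Python than in bash, here is a minimal sketch. It assumes the microprediction client is already installed in the active environment and that it exposes `new_key` and `MicroCrawler` as used below. The helper `load_or_create_write_key` and the `difficulty=10` setting are hypothetical, chosen for illustration, and the actual script may do this differently.

```python
import os
from pathlib import Path


def load_or_create_write_key(path, make_key):
    """Return the key stored at `path`; if the file is absent, create a key and save it.

    `make_key` is any zero-argument callable returning a string. It is only
    called when no key file exists, so the slow "burn" step happens once.
    """
    path = Path(path)
    if path.exists():
        return path.read_text().strip()
    key = make_key()
    path.write_text(key)
    return key


def main(working_dir="crawling_working_dir"):
    # Imported lazily so the helper above works without the client installed.
    # Assumption: `pip install microprediction` has been run in this environment.
    from microprediction import MicroCrawler, new_key

    os.makedirs(working_dir, exist_ok=True)
    key_file = Path(working_dir) / "WRITE_KEY.txt"

    # difficulty=10 is an illustrative value, not necessarily what the script uses.
    write_key = load_or_create_write_key(key_file, lambda: new_key(difficulty=10))

    crawler = MicroCrawler(write_key=write_key)
    crawler.run()  # keeps running until interrupted
```

Calling `main()` a second time reuses the key already saved in WRITE_KEY.txt rather than burning a new one. The sketch leaves out the virtual environment setup and the periodic bounce and upgrade.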

Once you are familiar with this process, you can return to the crawler documentation to see how to swap out the forecasting method for one that you invent, or simply prefer. You can also modify the crawler's navigation, that is, which streams it visits.
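To give a feel for what a replacement forecasting method might look like, here is a standalone sketch that does not depend on the client. The function name `random_walk_sample` is hypothetical. The sketch assumes, without confirmation from this page, that a crawler passes in recent values ordered most recent first and expects a fixed number of scenario guesses back (225 here). Check both assumptions, and how to plug such a function in, against the crawler documentation.

```python
import random
import statistics


def random_walk_sample(lagged_values, num_predictions=225, seed=None):
    """Return `num_predictions` sorted guesses for the next value.

    Assumption: `lagged_values` is ordered most recent first.
    The guesses are the latest value plus Gaussian noise whose scale is
    the standard deviation of past one-step changes.
    """
    rng = random.Random(seed)
    if len(lagged_values) < 3:
        # Too little history to estimate a scale; fall back to unit noise.
        center = lagged_values[0] if lagged_values else 0.0
        scale = 1.0
    else:
        changes = [a - b for a, b in zip(lagged_values[:-1], lagged_values[1:])]
        center = lagged_values[0]
        scale = statistics.pstdev(changes) or 1.0  # avoid zero spread
    return sorted(center + rng.gauss(0.0, scale) for _ in range(num_predictions))
```

A random walk is a deliberately naive baseline. It is here to show the shape of the inputs and outputs, not to suggest a competitive method.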
